Exploring the Future of AI in Content Creation

The future of AI in content creation is moving from single-task helpers to connected, multimodal systems that plan, produce, and personalize at scale. The near term looks collaborative. Humans set strategy. AI handles data-heavy work, drafts, and testing. Guardrails like editorial governance, disclosure, and bias checks keep trust intact.

Content Creation’s Future with AI: Executive Overview

Content creation’s future with AI is best understood as a shift from tools to teamwork. Smart systems are no longer just answering prompts or color-correcting images. They are stitching tasks together, analyzing audience signals, and adapting output in near real time. That changes the tempo and quality of the work, not just the cost.

Picture a Tuesday morning. The Slack ping is fast, the brief is thin, and the deadline is tighter than last week. An AI co-pilot pulls recent search trends, drafts outlines, suggests visuals, and flags factual gaps. The editor adjusts tone. Legal reviews claims. A social agent spins variants for short-form. The loop feels human, only lighter.

Three big ideas anchor AI’s impact on future content creation. Multimodal models unify text, image, audio, and video. Hyper-personalization moves from segments to dynamic individuals. Agentic workflows let AI handle sequences of tasks under human oversight. Each brings speed and scale. Each demands stronger governance to protect brand trust and community wellbeing.

Future of AI in Content Creation: Key Trends Shaping 2025 and Beyond

Multimodal models and agentic workflows

Multimodal AI reads, writes, and designs across formats in one system. That unlocks cohesive campaigns. A single brief can produce copy, a storyboard, social cuts, and alt text without losing the thread. Tools for video generation and editing make higher production values accessible to smaller teams while keeping timelines tight.

Agentic workflows string tasks together. The AI does not just draft a blog. It searches, summarizes, proposes a content calendar, generates three social variants, and prepares A/B test plans. Humans stay in the loop for judgment calls. This approach reduces handoffs and context loss, which is where quality often slips.

Two practical moves help this trend land well. Standardize input briefs so AI sees the right constraints. Then map repeatable sequences. Research. Outline. Draft. Edit. Visual. QA. Publish. Measure. Let AI run the sequence within rules. Editorial signs off at key gates. It feels like a modern newsroom, only with fewer copy-paste traps.
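The sequence above can be sketched in a few lines of Python. This is only an illustration, not a reference implementation: the stage names come from the text, while the choice of which stages are gated, the `approve` callback, and the `RunLog` type are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Stage names follow the sequence in the text.
STAGES = ["research", "outline", "draft", "edit", "visual", "qa", "publish", "measure"]
# Hypothetical choice of editorial gates; pick the ones that fit your team.
GATED = {"draft", "qa", "publish"}


@dataclass
class RunLog:
    completed: List[str] = field(default_factory=list)
    stopped_at: Optional[str] = None


def run_sequence(approve: Callable[[str], bool]) -> RunLog:
    """Walk the stages in order and stop at the first gate a human rejects."""
    log = RunLog()
    for stage in STAGES:
        # A gated stage only counts as done once the reviewer signs off.
        if stage in GATED and not approve(stage):
            log.stopped_at = stage
            break
        log.completed.append(stage)
    return log


# Example: the editor approves everything except the publish gate.
log = run_sequence(lambda stage: stage != "publish")
print(log.completed, log.stopped_at)
```

In practice the `approve` callback would be a review step in a workflow tool rather than a lambda. The point is that the gate list lives in one place and can be audited.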

Hyper-personalization and synthetic audiences

Hyper-personalization has moved past basic recommendations. AI now predicts likely intent and shapes messaging, timing, and format to match it. Think subject lines that shift tone, product images that reflect context, and content blocks that adjust based on real-time signals. This keeps relevance high without burning out teams.

Synthetic audiences add a helpful sandbox. These are AI-generated test cohorts built from privacy-safe aggregates, used to trial content before live traffic. They are not a replacement for real users. They help spot tone issues, bias risks, and clarity problems without pushing half-baked work to live audiences. Use them for risk control and speed, not mind-reading.

The payoff is stronger alignment between what people want and what your content says. The tradeoff is added responsibility. Label personalization clearly. Respect consent and local privacy laws. Audit models for representational bias. Trust is earned in the small details, like a callout that avoids stereotypes or a chatbot that knows when to escalate.

AI’s future in content generation: from prompts to autonomous teams

AI’s future in content generation is moving from clever prompting to orchestration. Prompting was a creative phase. Orchestration is operational. Autonomy grows as systems learn your brand style, legal constraints, and performance patterns. The output gets consistent. The oversight needs get sharper.

Autonomous teams of AI agents are arriving in production settings. One agent researches. One drafts. One edits for tone. One prepares social slices. One checks facts against approved sources. Humans approve gates and handle originality and insight. The result is faster cycles and fewer typos. The risk is sameness. Keep an editor focused on angles and originality to counter drift.

AI’s impact on future content creation rests on that balance. Let machines do what machines do well. Let humans shape meaning and moral judgment. People come for clarity and perspective, not just volume.

Why AI Is the Future of Content: Strategic Advantages and Limits

Speed, scale, and cost-efficiency without sacrificing quality

AI reduces the hours spent on repetitive work. It turns research and data analysis into minutes. It generates drafts and versions that would normally take a small army. Used with editorial guardrails, this compresses time to publish while improving consistency across channels.

- Faster research. Summaries and trend scans cut days of work to hours.

- Versioning at scale. Email and social variants are produced and scored quickly.

- Cross-format production. Text, images, and video stay aligned under the same brief.

- Lower per-asset costs. Teams produce more without ballooning headcount.

Quality does not have to drop. In high-performing teams, editors own the narrative and facts. AI carries the load on data crunching, first-pass drafts, and repetitive refactors. Measured this way, AI delivers speed and scale while content stays thoughtful and accurate.

Risks, bias, and safeguarding brand trust

There are real risks. Data privacy, representational bias, deepfake misuse, and over-automation all threaten trust. Strong governance and active human oversight mitigate these threats. Transparent disclosure about AI involvement also matters. Audiences do not punish smart assistance. They punish secrecy and sloppy claims.

- Privacy. Align with regional rules and treat consent as a design choice, not a footnote.

- Bias. Audit training data and outputs. Correct skew before it ossifies in content.

- Misuse. Ban synthetic personas that impersonate real people or mislead audiences.

- Quality drift. Put fact-checking and source reviews in the workflow. Reward original reporting and thought, not just quantity.

AI Writing Assistants and the Future of AI Writing

SEO and research co-pilots for authoritative content

SEO and research co-pilots help writers move faster and go deeper. They scrape search landscapes, summarize competitor angles, and surface factual gaps. They also flag queries where fresh reporting or subject matter input is needed. This lifts authority and reduces thin content risks.

- Query mapping. See what people ask and where intent differs.

- Source surfacing. Highlight primary sources and credible reports for citation.

- Schema prompts. Prepare structured data and check compliance with page goals.

- Search quality checks. Avoid keyword stuffing and hollow paragraphs. Prioritize helpfulness and clarity.

Long-form drafting, editing, and fact-checking

Writing assistants handle long-form scaffolding well. They propose outlines aligned to the brief, fill in first drafts, and suggest transitions. Editors then refine arguments, add original examples, and verify facts. Fact-checking agents cross-reference approved source libraries and flag uncertain claims for human review.

When used this way, AI writing stays human in voice and judgment, only with less grunt work. That is the future role of AI in content creation. Less drudgery. More thinking.

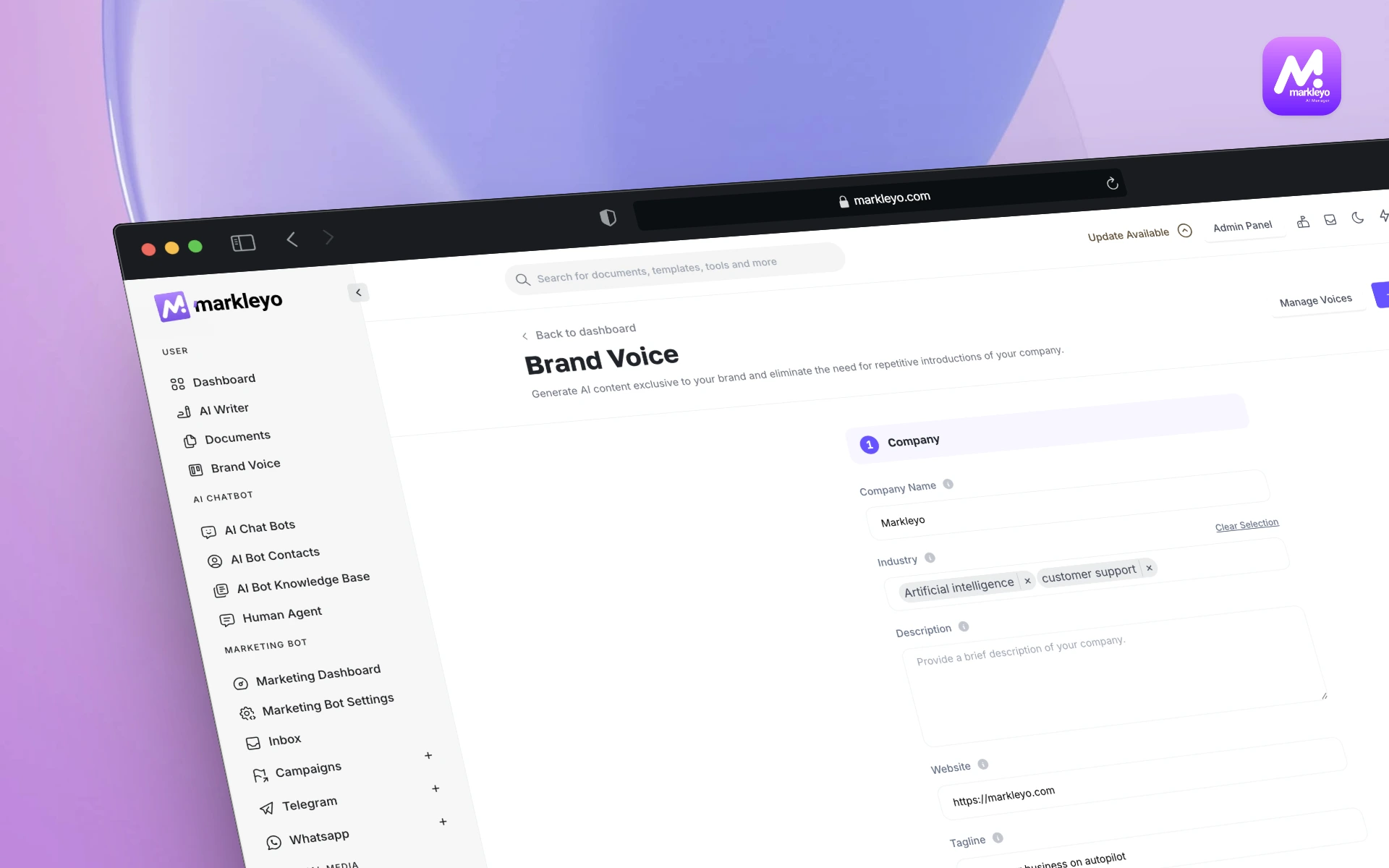

Preserving tone and brand voice at scale

Voice is a trust signal. AI can learn brand style through curated samples, do-not-use lists, and tone ladders. It can then replicate that tone across formats, from long-form to short-form. Editors still guard nuance. For sensitive topics, keep human-only review as a hard rule.

- Style guides. Train on approved content. Reinforce terms to avoid and preferred phrasing.

- Tone checks. Use AI to flag shifts in warmth or formality across sections.

- Escalation rules. Topics with safety, finance, or health claims need stricter review paths.
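A do-not-use list and an escalation rule can be expressed as a small pre-publish check. The sketch below is illustrative only: the banned phrases and the `review_path` function are hypothetical, and the sensitive topics are the three named in the list above.

```python
import re
from typing import Dict, List, Set, Union

# Hypothetical banned phrases; a real list would come from the brand style guide.
DO_NOT_USE = {"game-changing", "revolutionary"}
# Topics the text says need stricter review paths.
SENSITIVE = {"safety", "finance", "health"}


def review_path(text: str, topics: Set[str]) -> Dict[str, Union[List[str], str]]:
    """Flag banned phrases and pick a review path based on topic tags."""
    lowered = text.lower()
    banned = sorted(
        term for term in DO_NOT_USE
        if re.search(rf"\b{re.escape(term)}\b", lowered)
    )
    needs_strict = bool(SENSITIVE & {t.lower() for t in topics})
    return {"banned_terms": banned, "path": "strict" if needs_strict else "standard"}


print(review_path("A revolutionary health plan.", {"Health"}))
```

Whole-word matching keeps the check from flagging unrelated words that merely contain a banned term. Topic tags are assumed to be assigned upstream, for example in the brief.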

Generative Visuals and the Future of AI Art in Content

Image, video, and design production for campaigns

Generative visuals shrink timelines for creative. Storyboards, B-roll, thumbnails, and layout ideas can be produced quickly. Video tools simplify complex edits, while image systems deliver variants tuned to channel needs. This lets campaigns ship with more polish without waiting weeks for production windows.

- Script to scene. Generate draft scenes and revise visually in hours, not weeks.

- Design systems. Produce social and display variants that stay on brand.

- Alt text and accessibility. AI proposes descriptions that are then refined for clarity.

Rights, licensing, and ethical considerations

Rights need clear rules. Disclose AI-generated visuals. Avoid training on copyrighted data without permission. Advocate licensing that compensates original artists. Treat deepfake risks as brand risks. The ethical bar is not optional. It is audience protection and legal risk control at the same time.

- Disclosure. Label AI involvement clearly wherever visuals are used.

- Licensing. Prefer models and libraries with transparent data use policies.

- Guardrails. Ban synthetic likenesses of real people for commercial content without consent.

AI and the Future of Social Media Content

Short-form generation, scheduling, and A/B testing

Short-form content has become the front door to brand stories. AI supports ideation, scripting, captioning, and scheduling. It also runs structured A/B tests and watches real-time performance. The result is more learning cycles in less time and better alignment with platform norms and audience preferences.

- Idea sprints. Generate hooks and angles that fit platform culture.

- Scheduling. Plan content calendars faster and keep cadence steady.

- Testing. Try small format changes, track effect, and roll forward wins.
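To decide whether a variant has really won before rolling it forward, one common approach is a two-proportion z-test on conversion counts. The text does not name a method, so treat this as one reasonable option rather than the prescribed one; the function name and the sample numbers are made up for illustration.

```python
import math
from typing import Tuple


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rate, B minus A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: variant A converts 100 of 1000, variant B 150 of 1000.
z, p = two_proportion_z(100, 1000, 150, 1000)
print(round(z, 2), p < 0.01)
```

A small p-value suggests the difference is unlikely to be noise. It says nothing about whether the lift is large enough to matter, so pair it with a minimum effect size agreed before the test starts.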

Community management and UGC augmentation

AI assists with comment triage, sentiment checks, and moderation. It proposes replies that match tone while routing sensitive cases to humans. It also surfaces promising user-generated content and helps with rights and attribution flows. Community standards stay clear. Care still drives the interaction.

Workflow Design and the Future Role of AI in Content Creation

Human-in-the-loop processes and editorial governance

Human-in-the-loop is the operational backbone. It defines where AI can act, where humans must decide, and how conflicts are resolved. Editorial governance documents topics that require stricter reviews, expected source quality, and labeling rules. Train people to use AI well and to say no when the work needs more time.

- Gate reviews. Set approvals for research, claims, visuals, and publish events.

- QA checklists. Use standardized checklists for facts, style, accessibility, and bias.

- Incident response. Define steps if content causes harm. Correct quickly. Explain clearly.

The 30% rule, attribution, and compliance

The 30 percent rule is a practical guideline. Cap AI's contribution at about 30 percent of the total effort on any asset. Alternatively, use AI for no more than 30 percent of the steps in a workflow. Either way, humans own strategy, angle, facts, and final voice. Disclosure and source attribution stay explicit.

- Label AI assistance. Be transparent about where AI helped.

- Attribution. Cite primary sources and approved reports. Avoid vague references.

- Compliance. Align with local privacy rules and platform policies. Document decisions.
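The step-based reading of the 30 percent rule is easy to check mechanically. The sketch below assumes each workflow step is tagged with an owner of `"ai"` or `"human"`. The tagging scheme, the step assignments, and the `ai_share` helper are hypothetical.

```python
from typing import Dict

AI_STEP_LIMIT = 0.30  # the guideline from the text


def ai_share(steps: Dict[str, str]) -> float:
    """Fraction of workflow steps owned by AI."""
    if not steps:
        raise ValueError("Workflow has no steps.")
    ai_steps = sum(1 for owner in steps.values() if owner == "ai")
    return ai_steps / len(steps)


# Hypothetical assignment of owners to an eight-step workflow.
workflow = {
    "research": "ai", "outline": "human", "draft": "ai", "edit": "human",
    "visual": "human", "qa": "human", "publish": "human", "measure": "human",
}
share = ai_share(workflow)
print(share, share <= AI_STEP_LIMIT)  # 0.25 True
```

Counting steps is a rough proxy, since steps differ in size. Teams that follow the effort-based reading would weight each step by hours instead.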

AI and the Future of Content Production: Tools, Tech Stack, and ROI

Evaluating platforms, LLMs, and integrations

Stack choices shape quality and speed. Evaluate language models, visual tools, and workflow systems by accuracy, controllability, and privacy posture. Favor integrations that reduce copy-paste work and expose audit trails. A stable stack is a creative stack.

| Platform | Best fit | Strengths | Notes |
| --- | --- | --- | --- |
| LLM with strong reasoning | Research and long-form | Structured drafts and source mapping | Train on style guides and approved sources |
| Visual generation tool | Storyboards and social | Rapid variants and on-brand layouts | Use clear licensing and disclosure |
| Workflow planner | Cross-team orchestration | Assignments and approvals | Reduce silos and capture audit trails |
| Analytics suite | Impact measurement | Attribution and cohort views | Connect to content IDs and KPIs |

Measuring impact with content analytics and KPIs

ROI is not just more content. It is better content that moves people. Track a small set of KPIs linked to business and community outcomes.

- Search helpfulness. Rankings and on-page engagement for key queries.

- Conversion quality. Leads or sales with lift tied to content exposure.

- Community health. Sentiment, constructive replies, and moderation load.

- Production velocity. Time from brief to publish without quality loss.

- Error rate. Corrections and retractions over time.
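Two of these KPIs, production velocity and error rate, can be computed from a simple publishing log. The record layout, field names, and sample dates below are assumptions for illustration. Real data would come from your CMS or workflow planner.

```python
from datetime import date
from statistics import median
from typing import Dict, List

# Hypothetical publishing log: ISO dates plus a count of post-publish corrections.
assets: List[Dict] = [
    {"brief": "2025-01-06", "published": "2025-01-09", "corrections": 0},
    {"brief": "2025-01-06", "published": "2025-01-11", "corrections": 1},
    {"brief": "2025-01-10", "published": "2025-01-20", "corrections": 0},
]


def production_velocity_days(log: List[Dict]) -> float:
    """Median number of days from brief to publish."""
    durations = [
        (date.fromisoformat(a["published"]) - date.fromisoformat(a["brief"])).days
        for a in log
    ]
    return median(durations)


def error_rate(log: List[Dict]) -> float:
    """Share of assets that needed at least one correction."""
    return sum(1 for a in log if a["corrections"] > 0) / len(log)


print(production_velocity_days(assets), round(error_rate(assets), 2))
```

The median is used here rather than the mean so that one slow project does not distort the velocity figure. Track both numbers together, because velocity gains that raise the error rate are not gains.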

Build vs. buy: choosing your AI stack

Build when data privacy and customization are primary. Buy when speed to value and proven workflows matter. Many teams do both. A core bought platform, a few custom adapters, and a secure source library. Make choices based on constraints first, then ambition.

Macro and Cross-Industry Signals Shaping Content Creation’s Future

Future of AI in manufacturing: automation lessons for content ops

Manufacturing teaches a helpful lesson. Quality improves when repeatable tasks are automated and inspections are consistent. Content ops should borrow this. Automate versioning and formatting. Keep human inspections for facts, safety, and tone. The outcome is steadier output and fewer costly fixes.

Future of AI in cars: edge AI and real-time personalization

Cars show how context-aware systems work. Edge AI adapts in real time without sending all data to cloud services. Content can do similar work. Local personalization on devices with privacy controls. Faster, safer, and more respectful of consent. It will feel invisible when done well.

Scenarios for 2030–2050: Prospects of AI in Content Creation

Future of AI in 2050: plausible trajectories for content work

Three plausible paths stand out by 2050. Augmented craft. AI handles production and testing. Human editors shape meaning and ethics. Autonomous production. AI teams run day-to-day content with periodic human audits. Regulated collaboration. Clear rules guide AI use, disclosure, and licensing. The most likely outcome blends all three in different sectors.

A commonly cited macro estimate says AI could add trillions of dollars to the global economy by 2030, with media among the sectors expected to benefit. Exact figures vary by source and should be verified before they are quoted. The direction is clear. Content production gets faster and more personal. Oversight must keep pace.

AI and the future of content production in a regulated world

Regulation will continue to grow. Expect stronger guardrails on data privacy, algorithmic bias audits, and content disclosure. The safest path is to get ahead of it. Document policies, label AI assistance, and treat audits as part of the craft. Transparency is not a compliance burden. It is a trust builder.

Conclusion

Action plan for teams adopting AI in 2025

- Write policies. Disclosure, data use, licensing, and escalation rules.

- Map workflows. Define gates for research, claims, visuals, and publish.

- Train people. Teach prompting, bias audits, and editorial checks.

- Pilot small. Start with short-form and research co-pilots. Measure results.

- Build a source library. Approved primary sources and citations.

- Track KPIs. Link content to business and community outcomes.

Future of AI in content creation: key takeaways

- Multimodal and agentic AI will shape the next wave of content ops.

- Hyper-personalization grows, with stronger privacy and bias checks.

- AI’s future in content generation is orchestration, not just prompting.

- The 30 percent rule and clear labeling protect brand trust.

- The future of content creation with AI depends on better governance.

- Prospects of AI in content creation improve with transparent audits.

Next steps and resources

- Associated Press guidance on AI in newsrooms. See AP Workflow insights for trends and ethics.

- Harvard DCE overview on AI shaping marketing. A practical lens on personalization and governance.

- Visual production tools and use cases. Review industry examples for video and image work.

- Smartcore roundup on 2025 content trends. Helpful prompts and platform views.

Here is the thing. The future of AI in content creation rewards teams that stay thoughtful and fast at the same time. Use AI to clear the path. Keep humans focused on judgment and meaning. Then measure and adjust. That is how your content grows in value, and how your brand earns trust year after year.

Final takeaway. The future of AI in content creation thrives on orchestration, transparency, and human judgment. Start with small pilots, codify your guardrails, and scale what works. When machines get smarter, people need to get clearer. That clarity is your advantage.

FAQ on AI’s Impact on Future Content Creation

What is the future of content creation with AI?

It is collaborative. AI handles data-heavy steps, versioning, and testing. Human creators shape angles, facts, and ethics. Multimodal systems and agentic workflows bring speed and scale. Editorial governance keeps quality and trust steady.

Is AI going to replace content creators?

AI will replace repetitive tasks, not the need for judgment and originality. Content creators who use AI as a co-pilot will outpace those who do not. Roles shift toward strategy, narrative development, and community care.

What is the 30% rule in AI?

It is a practical guideline. Limit AI to about 30 percent of the work on any asset or workflow. Humans own key decisions, voice, and facts. It reduces quality drift and protects trust. Label AI assistance clearly.

Is content creation worth it in 2025?

Yes. Content creation remains a high-return activity when grounded in helpfulness, clarity, and measured iteration. AI improves speed and learning. Strong governance and smart metrics turn output into impact.